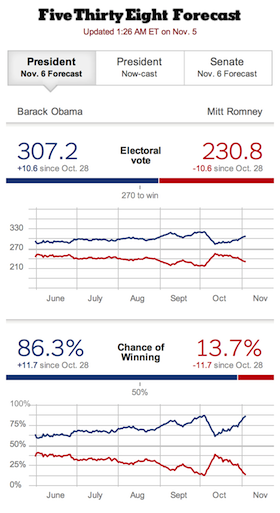

There’s been a lot of furor over the years about Nate Silver’s FiveThirtyEight blog, which predicts the outcome of U.S. elections using more math than mouth.

His critics usually include those who disagree with the predicted outcomes of his models and those who make their living crafting, um, less analytical narratives for the heated horse races of American politics. (You can read a brief history of FiveThirtyEight and its controversies on Wikipedia. As noted elsewhere, there are many similarities with the Moneyball revolution in baseball.)

But I love his work, regardless of whether it favors my preferred candidate in any given race.

To me, Nate’s work in politics is analogous to the advancement of the analytical movement in marketing, with data scientists and marketing technologists increasingly influencing senior-level strategy and operations. In both worlds, the rise of this analytical worldview has made many of the “old guard” uncomfortable. But it’s hard to argue with results.

One of my favorite quotes is from statistician George E. P. Box: “All models are wrong, but some are useful.” Nate Silver’s models have a track record of proving quite useful, correctly calling the outcome of even close elections. In 2008, he correctly predicted the winner of the presidential contest in 49 out of 50 states and the winners of all 35 Senate races.

It’s interesting to note, by the way, that Nate’s models primarily use “small data” — the results of many polls, aggregated together. However, some of the analytical techniques that he employs tend to be associated with big data. Want to know how he does it?
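To make “aggregated together” a bit more concrete, here is a minimal sketch of the simplest possible poll average, in which each poll’s result is weighted by its sample size. This is a generic illustration under stated assumptions, not FiveThirtyEight’s actual methodology; a real forecasting model layers much more on top. The `Poll` type and `aggregate` function are hypothetical names, and the margin-of-error calculation assumes the pooled respondents behave like one simple random sample.

```python
from dataclasses import dataclass
from math import sqrt


@dataclass
class Poll:
    # Hypothetical record: fraction of respondents backing a candidate (0..1)
    candidate_share: float
    sample_size: int


def aggregate(polls):
    """Sample-size-weighted poll average (illustrative sketch only).

    Returns (share, standard_error). The standard error assumes all
    respondents form one simple random sample, ignoring pollster bias,
    timing, and other effects a real model would account for.
    """
    total = sum(p.sample_size for p in polls)
    if total == 0:
        raise ValueError("need at least one poll with respondents")
    # Larger polls pull the average harder, in proportion to their size
    share = sum(p.candidate_share * p.sample_size for p in polls) / total
    # Binomial standard error of the pooled estimate
    standard_error = sqrt(share * (1 - share) / total)
    return share, standard_error


if __name__ == "__main__":
    polls = [Poll(0.52, 800), Poll(0.48, 1200), Poll(0.50, 1000)]
    share, se = aggregate(polls)
    print(f"share={share:.4f}, +/- {1.96 * se:.4f} (approx. 95%)")
```

With the three made-up polls above, the pooled share is 1492 / 3000, or about 0.497: the large 48% poll drags the average below the simple mean of 0.50. That is the basic intuition behind aggregation, since combining many noisy samples shrinks the error well below what any single poll offers.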

You’re in luck, because Nate seems as excited to talk about the how as he is about the what. Although he writes a popular blog, with millions of readers at the height of the political season, Nate doesn’t “dumb down” his analysis. He’s more than eager to carefully explain his methodology. In a world where statistics are often misused or misunderstood (see Should marketers be able to answer these 5 stats questions?), it’s refreshing to see this kind of public discourse.

Nate has a new book out, The Signal and the Noise: Why So Many Predictions Fail — But Some Don’t, which I’m looking forward to reading. For now, I’ll simply quote the inside jacket: “Nate Silver examines the world of prediction, investigating how we can distinguish a true signal from a universe of noisy, ever-increasing data.”

Sounds like just my kind of bedtime reading. 🙂

P.S. If Nate’s models prove disastrously wrong tomorrow, then I realize that I’m going to eat some crow for this post. But I’ll throw my lot in with the math men.